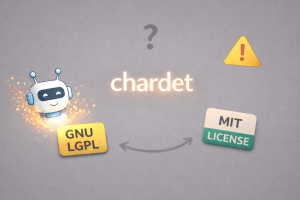

Can AI be used to reimplement software with nearly the same functionality and then change its license as a result, in what might be described as a form of license washing? This question has been discussed repeatedly for some time. Yet, setting aside reimplementations done for research purposes, I am not aware of any earlier real-world case in which a practical tool was reimplemented at large scale and its license was changed while the project name stayed the same. In March 2026, however, precisely such a situation arose with chardet, a Python character encoding detection library. In the public materials, chardet 7.0.0 is positioned as a “Ground-up, MIT-licensed rewrite,” and its license has been changed from the GNU LGPL to the MIT License. A poster identifying himself as Mark Pilgrim, the original author of chardet, has objected to that relicensing, and this has become the starting point of a major controversy. This article analyzes the matter with a focus on whether that license change was justified.

This article is organized solely on the basis of the published release notes, the rewrite plan and rewrite design, the issue, and the external form of other public files. It does not attempt a precise comparison or analysis of the substantive similarity of the source code as a whole. Accordingly, this article should be read not as a definitive legal conclusion, but as a legal and practical analysis based on what can be seen from publicly available materials.

- What Actually Happened with chardet

- Organizing the Issues

- The Reimplementation Process as Seen from the Rewrite Plan and the External Form of Public Files

- Conclusion

- Final Remarks

- References

Note: This article is an English translation of the original Japanese text, with some parts translated using an LLM. I believe it will also be useful for English speakers.

What Actually Happened with chardet

chardet is a Python character encoding detection library. In the official Changelog, version 1.0 from 2006 is described as a “Python 2 port of Mozilla’s universal charset detector.” In other words, the original starting point was a port of an implementation from the Mozilla lineage, and its original author was Mark Pilgrim, hereafter Mark.

The project was then maintained for many years, and most recently version 6.0.0 was released on February 22, 2026. Version 6.0.0 was still part of the old line of chardet, and the release materials for 7.0.0 explicitly state that “previous versions were LGPL.” The AI-based reimplementation that gave rise to the present controversy, version 7.0.0, was released on March 2, 2026, and just two days later, on March 4, version 7.0.1 was released as an ordinary bug fix and improvement release. In other words, the 7.x line was not a one-off experiment. It has been developed as a continuing release line.

The public Changelog and README for the controversial 7.0.0 are notably candid. They describe 7.0.0 as a “Ground-up, MIT-licensed rewrite of chardet. Same package name, same public API,” openly emphasizing that the project switched to the MIT License while keeping the same name and the same API. The rewrite plan, which served as instructions to the AI, also explicitly states as its goal: “Build a ground-up, MIT-licensed, API-compatible replacement for chardet 6.x.” The basic position that can be read from the public materials on the side of the current maintainer Dan Blanchard, hereafter Dan, is that because a separate implementation with the same public API was created from scratch, it can be treated as MIT-licensed.

In response, on March 4, 2026, Mark opened an issue in the project and identified himself as the original author of chardet. He argued that the current maintainers of 7.0.0 had no right to “relicense” the project and that this was an explicit violation of the GNU LGPL. He further stated that the maintainers had long had sufficient access to the old code and that this was not a clean room implementation, and he demanded that the original license be restored. As of March 9, 2026, the issue remained open, with neither an Assignee nor a Milestone attached. Public tags show that Mark’s involvement can be confirmed at least through version 1.0.1 in 2008, and there is a strong possibility that Mark at least retains copyright in his original contributions.

Note: The GitHub account claiming to be Mark is almost empty, and I cannot confirm whether it is actually him. Mark Pilgrim is also said to have retired from public internet activity in 2011. For the purposes of this article, however, I proceed on the assumption that the post was made by him.

Organizing the Issues

From the perspective of the original author Mark, the central issue is copyright infringement. If 7.0.0 constitutes a copying or adaptation of the older chardet, or in the language of United States law, a “derivative work,” then the explanation that it could be taken outside the scope of the LGPL and turned into MIT-licensed software becomes very difficult to sustain. LGPL 2.1 itself provides that one may not copy, modify, sublicense, or distribute the Library or its derivative works outside the scope of the license, and that any such attempt is void and automatically terminates one’s rights. It also treats a person as having accepted the license by modifying or distributing the Library or a work based on it.

Of course, aside from copyright infringement, the original author’s side might also raise a contractual claim if the LGPL is viewed as a contract, and might further raise separate arguments concerning use of the name and continuity on the basis of common law trademark rights or unfair competition. Still, because Dan’s side can argue that “everything was rewritten as an independent implementation,” the real core of the matter is ultimately whether copyright infringement occurred. Contract arguments may serve as a secondary line of analysis, but if 7.0.0 truly is an independent implementation that is neither a copy nor an adaptation of the prior copyrighted work, then it suddenly becomes difficult to determine how far the LGPL can reach. Conversely, if copying or adaptation is recognized, the LGPL would immediately carry substantial weight.

For that reason, this article proceeds on the premise that the central issue in this matter is copyright infringement.

The Reimplementation Process as Seen from the Rewrite Plan and the External Form of Public Files

Because this requires consideration of whether copyright infringement has occurred, I should make clear at the outset that I am not a specialist in source code analysis. If pursued to the end, this case likely would require analysis along the lines of the AFC test established in Computer Associates v. Altai, but that lies in the specialist domain of code comparison and is not where I should claim expertise. Accordingly, I limit myself here to organizing how far the publicly available rewrite plan and the external form of other files reveal circumstances of dependence, and how far they permit an inference of similarity.

Assessment from the Perspective of Dependence (ikyosei)

Under Japanese law, discussion of whether copyright infringement has occurred is typically organized around the Supreme Court’s framework of dependence and similarity. Under United States law, the analysis is divided into somewhat finer stages and discussed in terms of “access, copying, substantial similarity, protectable expression,” but in practical terms the key questions remain: did the later work come into contact with the earlier work and use it as its basis, and did it take in expression protected by copyright? Moreover, under 17 U.S.C. §102(b), the software’s “idea, procedure, process, system, method of operation” is not protected. What is protected is expression, and expression alone. The line of cases including Google v. Oracle has once again shown how important that limitation is when speaking of copyright infringement in software.

Against that background, if one looks at the contents of the publicly available rewrite plan used for the reimplementation, Mark’s argument as the original author appears fairly persuasive on the issue of dependence. In Task 3, the Encoding Registry section states that “Era assignments MUST match chardet 6.0.0’s chardet/metadata/charsets.py.” It goes on to say, “Fetch that file and use it as the authoritative reference,” and even, “Reference the chardet 6.0.0 charsets.py file … for the complete list of encodings and their era assignments.” In other words, at least for the registry-related portions, there are instructions explicitly to look at the old charsets.py and to make the new implementation match it using that file as an authoritative reference. That is a substantial factor weakening any claim of a clean room implementation.

The rewrite plan also includes procedures for obtaining test data. In the final 7.0.0 version’s scripts/utils.py, there is a mechanism that shallow clones tests/data from https://github.com/chardet/test-data.git . The README in that test-data repository says that the data were “pulled out into its own repo since licensing can be an issue,” and states that the copyrights in the individual test files belong to their respective publishers. What this shows is that the maintainers who carried out the reimplementation were themselves, at a minimum, conscious of licensing issues concerning the test data.
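For readers unfamiliar with the term, a shallow clone fetches only the most recent commit of a repository rather than its full history. The following is a minimal sketch of what such a helper could look like. It is not the contents of scripts/utils.py: the function names `shallow_clone_command` and `fetch_test_data` are hypothetical, and treating tests/data as the destination directory is my assumption. Only the repository URL is taken from the text above.

```python
import subprocess
from pathlib import Path

# URL quoted in the article; everything else in this sketch is hypothetical.
TEST_DATA_URL = "https://github.com/chardet/test-data.git"


def shallow_clone_command(repo_url: str, dest: Path) -> list[str]:
    """Build a git command that fetches only the latest commit (--depth 1)."""
    return ["git", "clone", "--depth", "1", repo_url, str(dest)]


def fetch_test_data(dest: Path, repo_url: str = TEST_DATA_URL) -> Path:
    """Clone the test data into dest unless that path already exists."""
    if dest.exists():
        return dest  # assume an earlier run already fetched the data
    subprocess.run(shallow_clone_command(repo_url, dest), check=True)
    return dest
```

The relevant point for this article is that the test files arrive from a separate repository at run time instead of being distributed inside the package itself.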

Dan himself also explained the reimplementation process carefully in comments on Mark’s issue. There he said that he began from an empty repository with no access to the old source tree, and that he explicitly instructed Claude not to base any work on LGPL or GPL licensed code. It is true that the rewrite design contains such instructions, but once the rewrite plan itself contains references to the old materials, it becomes difficult to say there was no dependence.

There is also a more fundamental point to consider, namely the possibility that the AI itself had used the code as training data. Anthropic’s official materials explain that Claude was trained on mixed data that included public information from the internet. On the other hand, the individual training datasets are not public, so it cannot be confirmed whether the chardet code that had been published on GitHub was part of the training data. Still, that possibility itself cannot be ruled out. In Japan, Professor Okumura of Keio University has described this in terms of “two-stage dependence,” meaning human dependence and AI dependence. If the AI itself had already been trained on the code, then one could argue that the AI was already dependent on that code, and that this too prevents a clean room implementation from being established.

Taken together, these facts make it difficult to say that the reimplementation was not dependent on the original implementation. Even from public materials alone, one can see a considerable degree of contact with and reference to the old project. My own view is that it is difficult to describe this as a clean room implementation in any strong sense. Generally speaking, a clean room implementation at the very least separates specification and implementation in a way that does not directly touch the expression of the original implementation, and in that sense as well the rewrite plan in this case weakens that claim.

Assessment from the Perspective of Similarity

Even so, the fact that dependence appears strong does not mean that copyright infringement immediately follows. Under United States law, copyright analysis is more exacting than under Japanese law in that it examines closely what counts as protectable expression. In Feist v. Rural, the Court held that factual compilations are not automatically protected as such, and that even where they are protected, protection extends not to the facts themselves but only to the original aspects of selection, coordination, and arrangement. Further, Computer Associates v. Altai held that in cases of non-literal infringement of software, one first filters out unprotectable elements through the abstraction-filtration-comparison test. In other words, under United States law, it is not enough that the logic or functionality of a program is similar. One must examine strictly whether the similarity concerns protectable creative expression.

Looking then at the older 6.0.0 charsets.py, which the rewrite plan explicitly instructed the AI to reference, one finds a metadata table in which the Charset dataclass contains name, is_multi_byte, encoding_era, and language_filter. By contrast, the new 7.0.0 registry.py uses EncodingInfo and has a more expanded metadata structure containing era, is_multibyte, python_codec, languages, and other fields. On their face, the two are similar in that both are tables containing attributes for each encoding, but their field structure and form of expression are not identical.
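To make the difference in outward form concrete, the two record shapes can be sketched roughly as follows. This is a hypothetical reconstruction, not a copy of either file: only the class names and field names come from the description above, while the types, the field order, and the frozen setting are my assumptions. The other fields of EncodingInfo are omitted because they are not named here.

```python
from dataclasses import dataclass, fields

# Hypothetical reconstruction: only class and field NAMES come from the article.
# Types, field order, and frozen=True are assumptions for illustration.


@dataclass(frozen=True)
class Charset:
    """Shape described for the 6.0.0 charsets.py metadata table."""
    name: str
    is_multi_byte: bool
    encoding_era: str
    language_filter: str


@dataclass(frozen=True)
class EncodingInfo:
    """Shape described for the 7.0.0 registry.py (other fields omitted)."""
    era: str
    is_multibyte: bool
    python_codec: str
    languages: tuple[str, ...]


def field_names(cls) -> list[str]:
    """List a dataclass's field names in declaration order."""
    return [f.name for f in fields(cls)]
```

Even in this reduced form, both shapes record an era and a multi-byte flag for each encoding, yet they name and group those attributes differently. That is the sense in which the two are similar in kind but not identical in form.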

At a more basic level, much of charsets.py is itself a metadata table. One can therefore argue that it has a factual character and is not protectable expression. On the other hand, if human creativity is recognized in the selection, classification, and assignment embodied in that table, then if those elements were copied and substantially incorporated into registry.py, there would remain room for a finding of similarity. The important point here is that much of the set of encoding names, the concept of eras, the corresponding Python codec names, and the mapping to languages also lies in an area close to function, standards, or fact. Even if registry.py was created with reference to the old charsets.py, that alone is not enough to say that the reimplemented 7.0.0 as a whole is a copying or adaptation of the old chardet. Under United States law, the question is not simply whether they are similar, but whether the similar parts are protectable expression. From Dan’s side, the central response would be that even if the rewrite plan refers to the old version, that reference was only for functional compatibility or data consistency and does not mean that protectable expression was taken.

Dan’s side also reported in reply to Mark’s issue that they used JPlag, a source code plagiarism detection tool, to compare the reimplemented 7.0.0 with prior versions and found that the maximum similarity was 1.29%. Considering that the maximum similarity between other versions ranged from 43% to 93%, this could support a quantitative assessment that the code as a whole shows low similarity. In practice, many developers would likely regard it as a completely different implementation, and such analysis may well have some persuasive force in a copyright dispute over source code. Still, because copyright infringement can arise from the taking of only a very small amount of protectable expression, this kind of similarity measurement should be treated as persuasive evidence, not as a conclusive answer.
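The limitation of a whole-program percentage can be illustrated with a toy measurement. The sketch below is not JPlag and does not reproduce its algorithm. As a stand-in, it uses Python's standard `tokenize` and `difflib` modules to compare token sequences, and the sample sources `old` and `new` are invented purely for illustration.

```python
import io
import tokenize
from difflib import SequenceMatcher

# Token kinds that carry code content; comments and layout tokens are ignored.
_KEPT = {tokenize.NAME, tokenize.OP, tokenize.NUMBER, tokenize.STRING}


def code_tokens(source: str) -> list[str]:
    """Return the text of the meaningful tokens in a Python source string."""
    reader = io.StringIO(source).readline
    return [t.string for t in tokenize.generate_tokens(reader) if t.type in _KEPT]


def token_similarity(a: str, b: str) -> float:
    """Ratio between 0.0 and 1.0 of matching tokens in two Python sources."""
    matcher = SequenceMatcher(None, code_tokens(a), code_tokens(b), autojunk=False)
    return matcher.ratio()


# Invented example: a short function carried over verbatim into a much larger file.
old = "def helper(x):\n    return x * 2 + 1\n"
new = old + "\n".join(f"value_{i} = compute_{i}()" for i in range(200)) + "\n"
score = token_similarity(old, new)
```

Here `score` comes out very low even though every token of `old` appears unchanged in `new`. A small overall percentage therefore says little about whether a particular fragment was carried over, which is why such figures are better read as supporting evidence than as a final answer.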

Once these points are organized, one must admit that the conclusions that can be drawn from public materials alone are limited. It seems likely that the reimplemented 7.0.0 depended on the original code, but how much protectable expression it took in cannot be determined easily from external appearance alone. Beyond this lies the specialist domain of code comparison from a legal perspective, and it is not something that I should claim to resolve by speculation.

Conclusion

To state my provisional assessment from public materials first: it is difficult to say that the reimplemented 7.0.0 is clearly an independent implementation, yet there is not enough to conclude immediately that it is copyright infringement.

Legal Assessment

From a legal perspective, even the currently public materials contain a fair number of points favorable to Mark as the original author. In particular, the instructions in the rewrite plan to use the old charsets.py as an authoritative reference are significant. The grounds for asserting that this was a clean room implementation appear weak, and at least with respect to access, or in Japanese terms something close to dependence, Mark’s position appears more persuasive.

Even so, it is too early to conclude from that alone that copying or adaptation has been established. Under United States law, one must show the taking of protectable expression, not merely ideas or procedures, and where the material is a compilation, protection may be thin. This level of analysis is not enough to say that the reimplemented 7.0.0 as a whole is a derivative work of the old chardet. Put differently, if detailed code comparison were to show that the reimplementation contains little or no protectable expression, Dan’s theory of independent implementation could still stand.

There is a strong basis for viewing charsets.py itself as a file newly created and developed by Dan in the 6.0.0 line, and to that extent it can be argued to be Dan’s work. That said, this does not mean Dan has the right to dispose of the rights in the old chardet project as a whole. If charsets.py is a derivative work dependent on prior copyrighted material, then the rights recognized in Dan would extend only to the newly added portions, and could not extinguish the rights of Mark and other existing rights holders. At the same time, it is entirely possible both that charsets.py may qualify as Dan’s work in the 6.0.0 line and that using it as an authoritative reference as the basis for the reimplementation weakens the clean room character of the process.

Accordingly, even though this looks only at a very small part of the implementation, if one focuses on charsets.py, where dependence is clearly shown in the AI instructions, both Mark’s position as original author and Dan’s position as current maintainer have real strengths when considering possible copyright infringement. At least with respect to dependence, Mark’s argument appears strong. If it were proven that protectable expression was incorporated into the new implementation, then infringement could be established, and the conduct could at the same time amount to a license violation. From Dan’s side, one possible response is that his own contribution to that file is substantial. Another possible response is that it contains only facts and not protectable expression. Still another is that even if the original author’s rights did reach the file, the reimplementation as a practical matter did not take in expression. Again, this is the sort of case that requires specialist analysis for proof, and it would not be surprising if different jurisdictions reached different conclusions. What can be said clearly is that this reimplementation is, at the very least, difficult to describe as a clean room implementation in any strong sense. Beyond that, it is difficult to make a firmer judgment at present.

Further, even if copyright infringement were rejected across the board, contract-based arguments would not disappear entirely, and there could still be disputes from that angle. LGPL 2.1 presupposes acceptance through modification and distribution, and in the United States it has long been debated whether violation of Open Source license conditions should be treated as contract or as copyright infringement. In Artifex v. Hancom, both breach of contract and copyright infringement were asserted in connection with the GPL, and in SFC v. Vizio, contract-related issues under the GPL and LGPL remain ongoing. Even so, copyright infringement remains the central issue here, and this is not the kind of matter that can be resolved entirely through contract theory alone. Still, if AI-based reimplementations are indeed going to increase dramatically, then we should keep in mind that future analysis may need to avoid treating copyright infringement as the only relevant lens.

Assessment from the Perspective of Community Governance and Ethics

The ability to keep the same repository name and the same distribution route on GitHub is a different matter from having the right to relicense the implementation of the old project as a whole on one’s own judgment. The former can affect downstream users in many ways, especially where the community is large, and for that reason community-governance and ethical evaluation should be more demanding than purely legal evaluation. Even if a court were ultimately to conclude that this case does not rise to copyright infringement, that would not automatically justify treating software that serves the same function but is described as a reimplementation, in other words a different program, as an update within the same project under the same name.

In its public documents, 7.0.0 itself claims to have the “Same package name, same public API.” Even after Mark stated his objections in the issue, 7.0.1 has continued to be developed as a normal bug fix and improvement release. At this point, Mark’s objections have at least not been accepted, and the new line continues forward while claiming to be the successor to the project.

In my own view, leaving aside whether the matter is legally black or white, this should have been released as a separate tool from the outset. If one carries out a full reimplementation using AI, completely changes the internal architecture, and even changes the license from copyleft to permissive, then it no longer seems to me to be a mere “update.” If the same project name absolutely had to be preserved, then restoring the original license and reducing the community’s doubts about continuity would at least have caused less confusion.

Final Remarks

AI-driven reimplementations will almost certainly increase dramatically from here forward. But that does not mean that a reimplementation of an existing project may be released as an update under the same name. The very fact that there remains room for legal dispute means the process should be approached cautiously, and even if the result were legally permissible, it would still create major confusion for organizational compliance and for downstream users’ understanding. It is true that some people will welcome a shift from copyleft to permissive licensing, but they would do well to recognize that the same method could also be used to move from Open Source to non-Open Source.

My own sense is that a complete AI-based reimplementation should in principle be treated as a separate tool. At the very least, one should not blur together, under the same project and the same release line, a product of long-term human development and a separate AI reimplementation. The license too should be chosen in a way that fits the code’s provenance and character, and conduct that makes it appear continuous with the original human-created software should be avoided. Further, if a full AI-based reimplementation is to be released as the successor to an existing project, then there is a duty of explanation, owed to all rights holders who contributed to the old implementation and to downstream users, to make clear at the time of release the code’s provenance, the scope of the old assets that were referenced, the basis for the license change, the relationship with prior rights holders, and the scope of compatibility and incompatibility.

References

chardet 7.0.0 release: https://github.com/chardet/chardet/releases/tag/7.0.0

chardet 7.0.1 release: https://github.com/chardet/chardet/releases/tag/7.0.1

No right to relicense this project, Mark Pilgrim: https://github.com/chardet/chardet/issues/327

Dan’s response: https://github.com/chardet/chardet/issues/327#issuecomment-4005195078

Chardet Rewrite Implementation Plan: https://github.com/chardet/chardet/blob/925bccbc85d1b13292e7dc782254fd44cc1e7856/docs/plans/2026-02-25-chardet-rewrite-plan.md

Chardet Rewrite Design: https://github.com/chardet/chardet/blob/925bccbc85d1b13292e7dc782254fd44cc1e7856/docs/plans/2026-02-25-chardet-rewrite-design.md

GNU Lesser General Public License, version 2.1: https://www.gnu.org/licenses/old-licenses/lgpl-2.1.html.en

17 U.S. Code § 102: https://www.law.cornell.edu/uscode/text/17/102

Explanation of “two-stage dependence” by @OKMRKJ: https://x.com/OKMRKJ/status/1702944463314907400